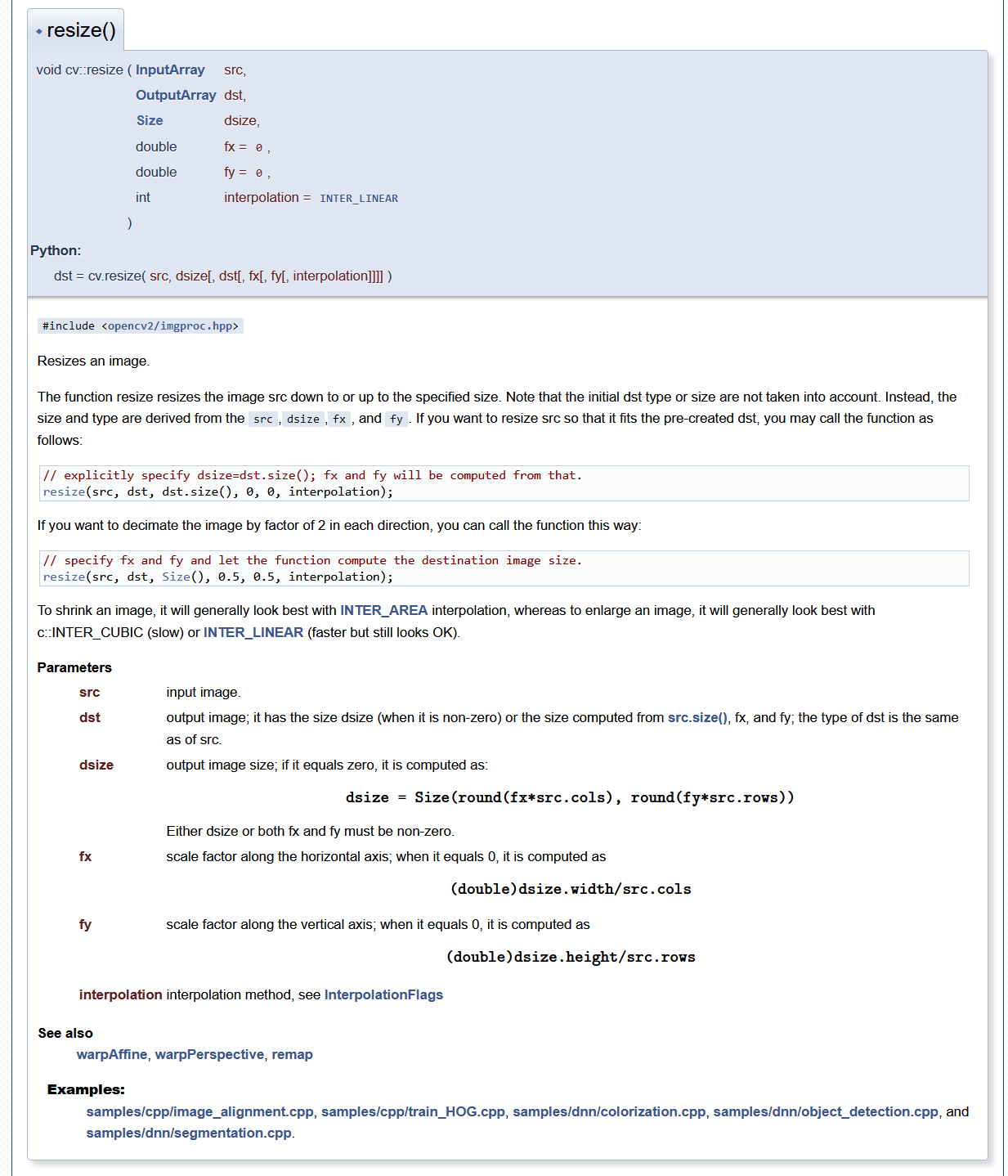

(In this case the difference is only 3%: the top class has a probability of 0.7 with scikit-image + Keras and 0.66 with OpenCV.) By the way, the parameters `fx` and `fy` denote the scaling factors in the function below: `img = cv2.resize(img, None, fx=0.5, fy=0.5, interpolation=cv2.`
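As a rough illustration of what `fx` and `fy` do: the OpenCV documentation describes the output size, when no explicit `dsize` is given, as the source width and height multiplied by `fx` and `fy` and then rounded. The helper below, `scaled_size`, is a hypothetical name and not part of OpenCV; it only models that arithmetic in plain Python.

```python
def scaled_size(width, height, fx, fy):
    """Return (new_width, new_height) for the scale factors fx and fy.

    Hypothetical helper: it mirrors the documented rule
    dsize = (round(fx * width), round(fy * height)) and nothing else.
    """
    if fx <= 0 or fy <= 0:
        raise ValueError("scale factors must be positive")
    return round(fx * width), round(fy * height)


if __name__ == "__main__":
    # Halving a 640x480 image in both directions.
    print(scaled_size(640, 480, 0.5, 0.5))
```

Passing `fx=0.5, fy=0.5` therefore halves each dimension, which is the case shown in the call above.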

In OpenCV, we use the `cv2.resize()` function to resize an image. The function resizes the image `src` down to or up to the specified size, so the rescaled images are either shrunk or enlarged. The first argument is the image that you want to convert. Note that the initial `dst` type or size are not taken into account. If you're interested in shrinking your image, `INTER_AREA` is the way to go.

I think the error comes from the dimension, which requires only the width and height. Try this: `imgarray = cv2.resize(imgarray, dsize=(299, 299), interpolation=cv2.INTER_CUBIC)`. You can find the detailed explanation here: Explanation of cv2.

My final application will use OpenCV in C++, so I need to match these snippets, as my network has been trained with data generated by scikit-image. I think the problem is that the resize function of OpenCV (used internally by `blobFromImage`) produces a different result from the scikit-image resize. I'm not saying it is bugged; I just want to obtain the same results between OpenCV and scikit-image, by changing something in one or the other. For example, for this image, with OpenCV:

`image32 = cv2.imread(image).astype(np.float32)`

`cv2.cvtColor(image32, cv2.COLOR_BGR2RGB, image32)`

`new_image = cv2.resize(image32, (80, 80), interpolation=cv2.INTER_LINEAR)`

The upper-left pixel value is: 145.91113, 76.853516, 92.67676

But with scikit-image: `img = resize(imread(img_path, as_grey=False), (80, 80), preserve_range=True, mode='constant')`

Scikit-image uses bilinear interpolation by default, the same as OpenCV, so the results should be the same. I also tried every possible value for the `mode` parameter, but I can never obtain the same result between scikit-image and OpenCV. Although the values seem only slightly different, when passed to the network they produce a different result, which I noticed can differ by up to 12% in classification probability.
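To make the mismatch more concrete, here is a minimal one-dimensional sketch in plain Python. It does not call OpenCV or scikit-image. It compares two shrinking strategies on one row of pixels: plain linear sampling at half-pixel centres (the general idea behind `INTER_LINEAR`) and block averaging (the idea behind `INTER_AREA` for integer factors). The function names are hypothetical. One possible cause of the difference described above, which is an assumption here and not something the source confirms, is that one library smooths or averages before sampling while the other does not; scikit-image's `resize` has an `anti_aliasing` parameter for this.

```python
def linear_resize_1d(src, out_len):
    """Linear resampling with half-pixel centre alignment and no pre-filter."""
    n = len(src)
    scale = n / out_len
    out = []
    for i in range(out_len):
        # Map the centre of output pixel i back into source coordinates.
        x = (i + 0.5) * scale - 0.5
        x = min(max(x, 0.0), n - 1.0)  # clamp to the valid range (edge replication)
        x0 = int(x)                    # left neighbour (x is non-negative)
        x1 = min(x0 + 1, n - 1)        # right neighbour
        t = x - x0                     # interpolation weight
        out.append((1 - t) * src[x0] + t * src[x1])
    return out


def area_resize_1d(src, out_len):
    """Shrink by averaging equal-sized blocks (integer shrink factors only)."""
    n = len(src)
    if n % out_len != 0:
        raise ValueError("this sketch only handles integer shrink factors")
    k = n // out_len
    return [sum(src[i * k:(i + 1) * k]) / k for i in range(out_len)]


if __name__ == "__main__":
    row = [10, 20, 30, 100, 10, 20, 30, 100]
    print(linear_resize_1d(row, 2))  # uses only the two samples nearest each centre
    print(area_resize_1d(row, 2))    # uses every sample in each block
```

On this row the two strategies give different values even though both are reasonable ways to shrink. A network trained on one kind of input can respond differently to the other, so a practical first step is to check whether both pipelines apply the same smoothing before interpolation.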
The network is the same and the input data is the same, but the results are slightly different. The reason is that the resize function behaves differently in scikit-image and in OpenCV (which `blobFromImage` uses internally), and I don't know how to adapt the OpenCV code to match scikit-image. The problem is that I cannot get the same data to pass to the network (the DNN module, in the case of OpenCV). On the OpenCV side the model is loaded from `'mynet.prototxt'` and `'mynet.caffemodel'`. On the scikit-image side the image is prepared with `image = resize(imread(img_path, as_grey=False), (80, 80), preserve_range=True, mode='constant')`.

Concatenate images of the same size vertically and horizontally: images can be combined in tile form using both `cv2.hconcat()` and `cv2.vconcat()` with a 2D list (Python 3): `def concatvh(list2d): return cv2.vconcat(cv2.` The horizontal example displays its result with `cv2.imshow('hconcatresize.jpg', imghresize)`, and the output is hconcatresize.jpg.
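The tile-style concatenation described above can be sketched without OpenCV by treating each image as a list of pixel rows. The functions `hconcat_lists`, `vconcat_lists` and `concat_tiles` are hypothetical stand-ins written for this illustration. They are not the `cv2` functions; they only show the order of operations: join each row of tiles side by side, then stack the resulting strips.

```python
def hconcat_lists(images):
    """Join images side by side; every image must have the same number of rows."""
    height = len(images[0])
    if any(len(img) != height for img in images):
        raise ValueError("all images must have the same height")
    return [[px for img in images for px in img[r]] for r in range(height)]


def vconcat_lists(images):
    """Stack images vertically; every row must have the same width."""
    width = len(images[0][0])
    if any(len(row) != width for img in images for row in img):
        raise ValueError("all images must have the same width")
    return [list(row) for img in images for row in img]


def concat_tiles(grid):
    """Combine a 2D list of same-sized images into one tiled image."""
    return vconcat_lists([hconcat_lists(row) for row in grid])


if __name__ == "__main__":
    a = [[1, 1], [1, 1]]
    b = [[2, 2], [2, 2]]
    c = [[3, 3], [3, 3]]
    d = [[4, 4], [4, 4]]
    for row in concat_tiles([[a, b], [c, d]]):
        print(row)
```

With real image arrays, the same composition would presumably nest the horizontal concatenation inside the vertical one in the same way.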

I'm trying to reproduce the same output with these snippets. The Scikit-Image + Keras side begins with `from keras.models import model_from_json`.
